MMM: Does this model suffer from overfitting?

Are the models robust?

A model can sometimes spot patterns that aren’t really there.

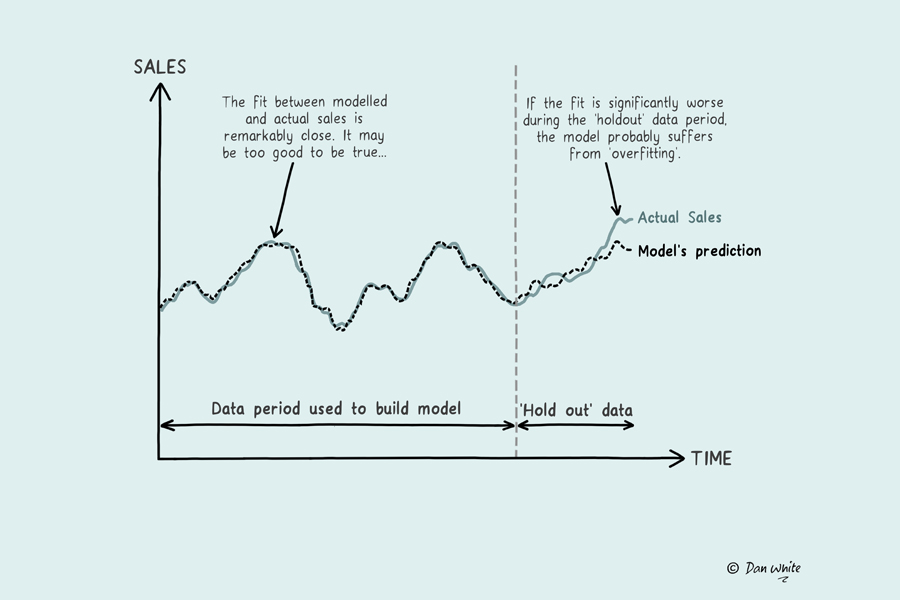

Overfitting happens when an MMM becomes too closely tailored to historical data - explaining every tiny rise and fall in sales, including random noise. While this can make the fit to past performance look highly precise, it often reduces the model’s ability to predict what will happen next.

In other words, it learns the quirks of the past rather than the underlying signals and patterns that drive real growth.

This matters for OOH because the channel often appears in distinct bursts and alongside other strong sales drivers. If the model overfits those specific conditions, it may struggle to estimate performance when spend levels, timing or supporting media change. A model fitted this narrowly has learned a ‘narrow’ rule that only holds under the conditions it was built on.

A robust model should balance accuracy with generalisation - explaining the past without becoming trapped by it.

How do you check for overfitting in an MMM?

Overfitting is best checked using a holdout test, where part of the historical data is excluded when building the model and then used to test how accurately the model predicts unseen periods.

If the predictions for the held-out periods are close to what actually happened, the model has likely learned real patterns; if they are far off, it may be overfitted.
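As a rough illustration of the holdout idea, the sketch below uses simulated weekly data and a plain least-squares regression as a stand-in for a real MMM (which would normally also handle things like carry-over and diminishing returns). All names and numbers here (`holdout_check`, the `tv` and `ooh` series, the 60 irrelevant variables, the 26-week holdout) are assumptions made for the example, not part of any particular modelling tool. It fits one lean model and one model padded with irrelevant variables, then compares the error on the weeks used for fitting with the error on the held-out weeks.

```python
import numpy as np


def mape(actual, predicted):
    """Mean absolute percentage error as a fraction (0.05 means 5%)."""
    return float(np.mean(np.abs((actual - predicted) / actual)))


def holdout_check(X, y, n_holdout):
    """Fit least squares on the early weeks, then score the last n_holdout weeks.

    Returns (error on the fitting weeks, error on the held-out weeks).
    """
    X = np.column_stack([np.ones(len(y)), X])  # add an intercept column
    X_train, X_test = X[:-n_holdout], X[-n_holdout:]
    y_train, y_test = y[:-n_holdout], y[-n_holdout:]
    coef, *_ = np.linalg.lstsq(X_train, y_train, rcond=None)
    return mape(y_train, X_train @ coef), mape(y_test, X_test @ coef)


# Simulated two years of weekly data (illustrative values only).
rng = np.random.default_rng(0)
n_weeks = 104
tv = rng.uniform(0, 100, n_weeks)
# OOH runs in bursts: active in roughly 30% of weeks, zero otherwise.
ooh = np.where(rng.random(n_weeks) < 0.3, rng.uniform(20, 80, n_weeks), 0.0)
sales = 1000 + 3.0 * tv + 5.0 * ooh + rng.normal(0, 50, n_weeks)

drivers = np.column_stack([tv, ooh])
irrelevant = rng.normal(size=(n_weeks, 60))  # variables with no link to sales

lean_train, lean_test = holdout_check(drivers, sales, n_holdout=26)
over_train, over_test = holdout_check(
    np.column_stack([drivers, irrelevant]), sales, n_holdout=26
)

print(f"Lean model:   fit error {lean_train:.1%}, holdout error {lean_test:.1%}")
print(f"Padded model: fit error {over_train:.1%}, holdout error {over_test:.1%}")
```

The pattern to look for is the gap between the two errors. The padded model should look better on the weeks it was fitted to but worse on the held-out weeks, which is the signature of overfitting described above; the lean model's two errors should be much closer together.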

Does my model suffer from overfitting?

NO!

The model performs well on holdout data.

Good - it has learned genuine patterns.

Check the model fit in the holdout periods against your expectations, and confirm that the inputs still make sense as drivers of sales in your category.

YES!

The model struggles on unseen data.

If the model struggles with unseen data, its ROI figures may not be stable and should be treated with caution. Work with your modeller to remove or refine variables that are picking up incorrect, incidental patterns, and to improve the quality of the input data.

To understand more: